Year in Review: Data Centers Spur New Architectures

December 21, 2021 - Author: Bob Wheeler

We entered 2021 more concerned about demand than supply, but those concerns reversed by year-end. Demand was so strong that customers began to experience lead times beyond 52 weeks in some cases. Although capacity ultimately constrained 2021 growth, the situation bodes well for continued growth in 2022. Demand from hyperscale cloud-service providers (CSPs) is notoriously choppy, however, so growth never follows a straight line.

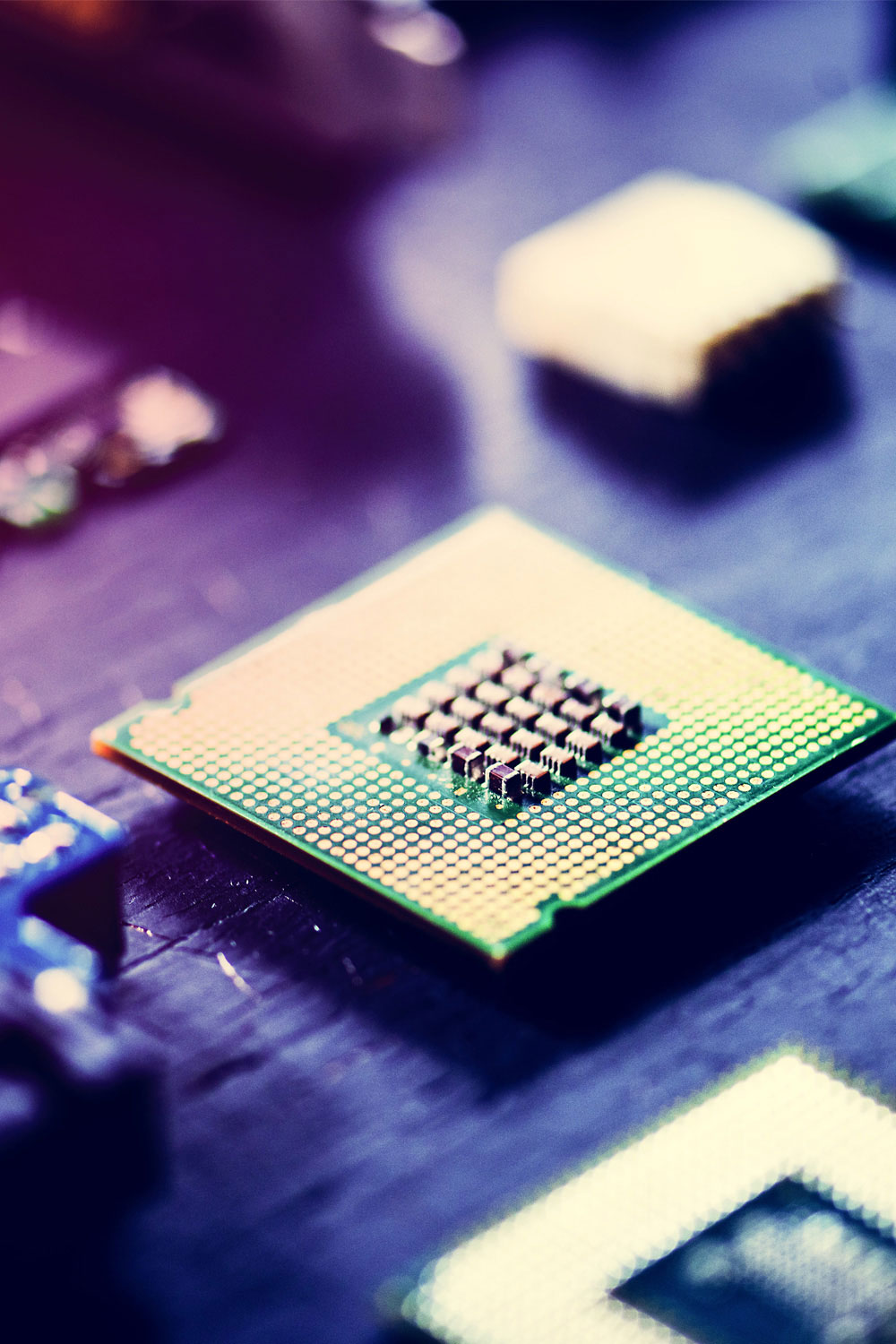

The year began as expected, with AMD introducing its third-generation Epyc (Milan) processor, quickly followed by Intel’s third-generation Xeon Scalable (Ice Lake-SP). These vendors then shifted their sights to 2022, with Intel revealing many details of the next-generation Sapphire Rapids processor and its associated new platform. AMD opened up about its 5nm Epyc generation, which will include a cloud-optimized version. Although CSPs predictably rolled out new compute instances based on the latest x86 processors, a few also developed in-house Arm-based server processors, including Amazon with its third-generation Graviton.

After a wave of product introductions in 2020, the AI-accelerator market shifted in 2021 to delivering those products. Much of the activity revolved around training, as many vendors offering inference accelerators targeted edge rather than data-center deployments. The dominant vendor for both training and inference, Nvidia, rolled out lower-power and lower-cost Ampere products rather than a new architecture. Startups that delivered first samples this year include Esperanto and Untether. MLCommons completed 1.0 releases of its MLPerf Inference and MLPerf Training benchmarks, providing an industry baseline for performance comparisons.

TSMC is looking forward to 2022, as AMD, Intel, and Nvidia plan to ship new products using its 5nm process. The new year will see packaging advances, too, including broader deployment of 3D chiplet integration. We’ll also see ongoing progress in data-center disaggregation, with CXL providing the foundation for separating compute resources from memory resources.

Subscribers can view the full article in the Microprocessor Report.