TimbreAI Scales Down an Octave

Expedera’s new licensable deep-learning accelerator (DLA) runs simple neural networks for audio processing in a tiny engine that draws less than one milliwatt.

Bryon Moyer

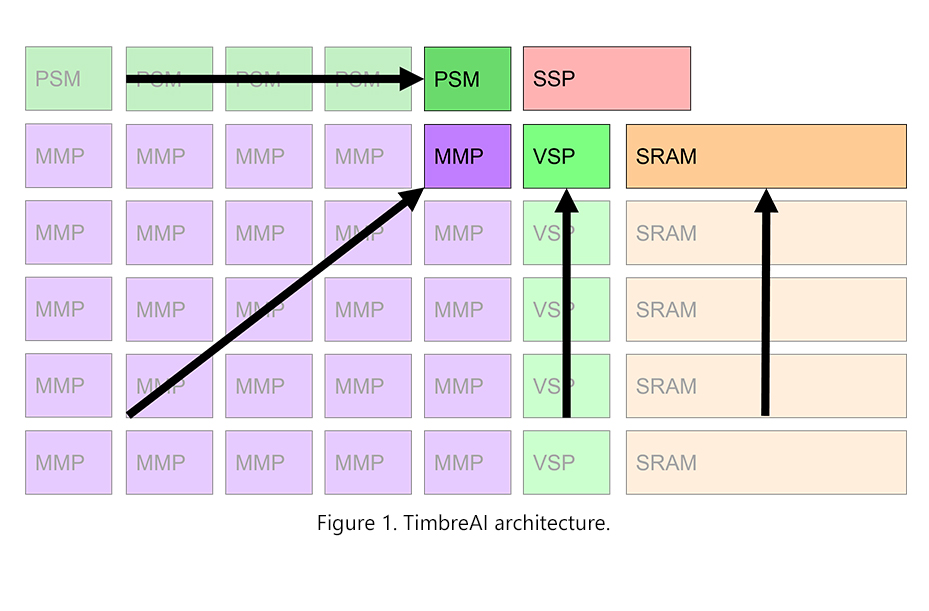

Expedera has produced a tiny deep-learning accelerator (DLA) for processing audio AI. The new TimbreAI T3, an intellectual-property (IP) block, occupies a mere 0.06mm² in 22nm technology. Whereas popular audio applications that handle natural-language content require large Transformer-based models, TimbreAI targets simpler audio functions such as speech-to-text and audio-signal cleanup.

TimbreAI is a scaled-down version of the company’s Origin architecture. In 22nm, it can achieve 3.2 billion operations per second (GOPS) while drawing less than 300 microwatts. It’s generally available for design starts now.
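The throughput, power, and area figures quoted above imply an efficiency that the article does not state directly. The following is a back-of-the-envelope sketch only: it assumes the 3.2 GOPS peak and the sub-300-microwatt power apply to the same operating point, which the source does not confirm, and the function names are illustrative.

```python
# Figures quoted in the article for TimbreAI T3 in 22nm.
PEAK_OPS_PER_SECOND = 3.2e9        # 3.2 GOPS
POWER_WATTS_UPPER_BOUND = 300e-6   # "less than 300 microwatts"
AREA_MM2 = 0.06                    # 0.06mm² in 22nm

def tops_per_watt(ops_per_second: float, watts: float) -> float:
    """Energy efficiency in trillions of operations per second per watt."""
    return ops_per_second / watts / 1e12

def picojoules_per_op(ops_per_second: float, watts: float) -> float:
    """Average energy spent per operation, in picojoules."""
    return watts / ops_per_second * 1e12

def gops_per_mm2(ops_per_second: float, area_mm2: float) -> float:
    """Compute density in billions of operations per second per mm²."""
    return ops_per_second / area_mm2 / 1e9

# Assumption: peak throughput and the quoted power bound coincide.
# Because the power is an upper bound, the efficiency is a lower bound.
efficiency = tops_per_watt(PEAK_OPS_PER_SECOND, POWER_WATTS_UPPER_BOUND)
energy = picojoules_per_op(PEAK_OPS_PER_SECOND, POWER_WATTS_UPPER_BOUND)
density = gops_per_mm2(PEAK_OPS_PER_SECOND, AREA_MM2)

print(f"at least {efficiency:.1f} TOPS/W")
print(f"at most {energy:.3f} pJ per operation")
print(f"about {density:.1f} GOPS/mm²")
```

Under that assumption, the numbers work out to roughly 10.7 TOPS/W or better, a bit under 0.1 pJ per operation, and about 53 GOPS/mm².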

The four-year-old startup, headquartered in Silicon Valley, completed an $18 million funding round last fall, led by Marvell. At least one design win for its Origin series has reached production, performing low-light video de-noising in a smartphone. The customer remains unnamed but claims it achieved 20x the performance at half the power of an unspecified alternative implementation. In addition, Expedera has raised the maximum compute capacity of a single Origin core from 100 trillion to 128 trillion operations per second (TOPS) by adding both more tiles and more MACs per tile; it declined to give details.

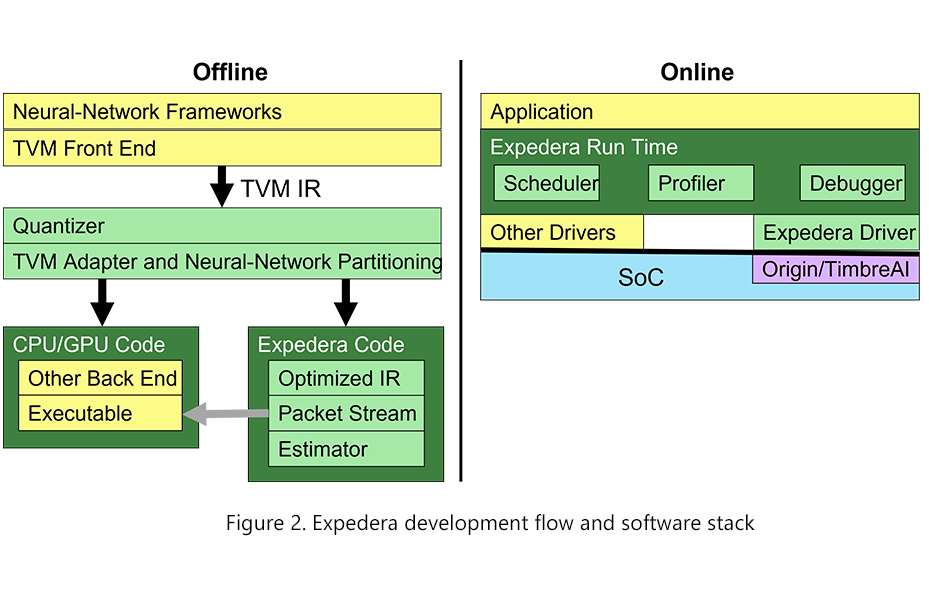

The company has also disclosed more about its software stack. The hardware engine natively handles certain high-level neural-network operators rather than having the development software compile them to low-level code. This approach simplifies the stack and cuts the instruction and data fetches needed during execution, which lowers power. The tools decompose unsupported operators to a lower level, allowing them to run on an Expedera DLA or a CPU/GPU at the expense of efficiency.
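The split between natively executed operators and decomposed ones can be pictured with a small planning sketch. Everything here is hypothetical: the article does not say which operators the hardware supports natively, how the tools decompose the rest, or how they pick a target, so the operator names, decomposition rules, and target labels below are illustrative assumptions rather than Expedera's toolchain.

```python
# Hypothetical operator sets; the article does not list the real ones.
NATIVE_OPS = {"conv2d", "matmul", "relu"}          # run as single high-level ops
LOWERED_DLA_OPS = {"mul", "add", "sub", "tanh"}    # primitives the DLA can still run

# Hypothetical decomposition rules for operators with no native support.
DECOMPOSITIONS = {
    "gelu": ["mul", "tanh", "add", "mul"],
    "softmax": ["exp", "sum", "div"],
}

def lower(op: str) -> list[tuple[str, str]]:
    """Return (operation, target) steps for one graph operator."""
    if op in NATIVE_OPS:
        # One high-level step: no expansion into low-level code.
        return [(op, "dla-native")]
    if op not in DECOMPOSITIONS:
        raise ValueError(f"no decomposition rule for operator {op!r}")
    steps = []
    for primitive in DECOMPOSITIONS[op]:
        # Primitives the DLA cannot run fall back to the host CPU/GPU.
        target = "dla-lowered" if primitive in LOWERED_DLA_OPS else "host"
        steps.append((primitive, target))
    return steps

def plan(graph: list[str]) -> list[tuple[str, str]]:
    """Flatten a linear operator list into an execution plan."""
    return [step for op in graph for step in lower(op)]

example_plan = plan(["conv2d", "gelu", "softmax"])
for operation, target in example_plan:
    print(f"{operation:8s} -> {target}")
```

In this toy model, a natively supported operator costs one step, while each decomposed operator expands into several. That expansion is a rough stand-in for the extra instruction and data fetching the article associates with lower efficiency.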

